![ubuntu-logo]()

LAST UPDATED: 22nd April, 2014 / 0800 hours pkst

YouTube caching is working as of 22nd April, 2014 (tested and confirmed).

[1st version > 11th January, 2011]

What is LUSCA / SQUID?

LUSCA is a fork of Squid 2. The Lusca project aims to fix the shortcomings of Squid 2, and it also supports a variety of clustering protocols. With it you can cache some dynamic content that you could not cache with stock Squid.

For example:

# Video caching, i.e. YouTube and similar tube sites

# Windows / Linux updates, anti-virus and anti-malware updates, i.e. Avira / Avast / MBAM etc.

# Well-known sites, i.e. Facebook / Google / Yahoo etc.

# Download caching: mp3 / mpeg / avi etc.

Advantages of YouTube Caching

In most parts of the world bandwidth is very expensive, so in some scenarios it is very useful to cache YouTube videos or other flash videos. If one user has already downloaded a video or flash file, the same user or another user should be able to get that file from the cache instead of pulling the same content through the internet pipe again and again.

People on the same LAN often watch the same videos. If I post a YouTube link on Facebook, Twitter or the like, all my friends will watch it, and that one video gets viewed many times within a few hours. Videos are usually shared over Facebook and other social networking sites, so the chances of multiple hits on a popular video are high for my LAN users and friends.

This is the reason I wrote this article. I have implemented LUSCA / Squid on Ubuntu and it is working great, but to get good results you need a few TB of storage in your proxy machine.

Disadvantages of YouTube Caching

The chance that another user will watch the same video can be slim. If I search for something specific on YouTube, I get hundreds of results for the same video. What is the chance that another user will search for the same thing and click the same result? YouTube hosts more than 10 million videos, which is far too much to cache. You need a lot of disk space to cache videos, and correspondingly fast hardware with plenty of RAM to handle such a giant cache. Anyhow, try it.

This article is divided into the following sections:

1# Installing SQUID / LUSCA in UBUNTU

2# Setting up SQUID / LUSCA configuration files

3# Performing some tests, testing your cache HIT

4# Using ZPH TOS to deliver cached content to clients via Mikrotik at full LAN speed, bypassing the user queue for cached content.

1# Installing SQUID / LUSCA in UBUNTU

I assume your Ubuntu box has two interfaces configured, one for LAN and the second for WAN, and that internet sharing is already configured. Now moving on to the LUSCA / SQUID installation.

Here we go ….

Issue the following command. Remember that it is a one-go command (it has multiple commands chained inside it, so it may take a while to update, install and compile all required items).

apt-get update &&
apt-get install -y gcc build-essential libstdc++6 unzip bzip2 sharutils ccze libzip-dev automake1.9 acpid libfile-readbackwards-perl dnsmasq &&
cd /tmp &&
wget -c http://wifismartzone.com/files/linux_related/lusca/LUSCA_HEAD-r14942.tar.gz &&
tar -xvzf LUSCA_HEAD-r14942.tar.gz &&
cd /tmp/LUSCA_HEAD-r14942 &&
./configure \
--prefix=/usr \
--exec_prefix=/usr \
--bindir=/usr/sbin \
--sbindir=/usr/sbin \
--libexecdir=/usr/lib/squid \
--sysconfdir=/etc/squid \
--localstatedir=/var/spool/squid \
--datadir=/usr/share/squid \
--enable-async-io=24 \
--with-aufs-threads=24 \
--with-pthreads \
--enable-storeio=aufs \
--enable-linux-netfilter \
--enable-arp-acl \
--enable-epoll \
--enable-removal-policies=heap \
--with-aio \
--with-dl \
--enable-snmp \
--enable-delay-pools \
--enable-htcp \
--enable-cache-digests \
--disable-unlinkd \
--enable-large-cache-files \
--with-large-files \
--enable-err-languages=English \
--enable-default-err-language=English \
--enable-referer-log \
--with-maxfd=65536 &&
make &&
make install
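Once the build finishes, it can be worth confirming that the installed binary really carries the options the later steps rely on (aufs storage for the cache_dir line, the referer log that storeurl.pl reads). The snippet below is only a sketch: the function name missing_options is made up for illustration, and it assumes that squid -v prints the configure options of the build.

```shell
# missing_options VERSION_TEXT OPTION...
# Hypothetical helper: prints every OPTION that does not appear in VERSION_TEXT.
# Prints nothing when all options are present.
missing_options() {
    version_text=$1
    shift
    for opt in "$@"; do
        # -F: match a fixed string, -e: allow patterns that start with a dash
        printf '%s\n' "$version_text" | grep -qF -e "$opt" || printf '%s\n' "$opt"
    done
}
```

A possible way to use it after the install: missing_options "$(squid -v)" --enable-storeio=aufs --enable-referer-log. Empty output would mean both options were compiled in.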

EDIT SQUID.CONF FILE

Now edit the squid.conf file using the following command:

nano /etc/squid/squid.conf

Delete all existing lines and paste the following lines.

Remember, the following squid.conf is not very neat and clean; you will find many unnecessary junk entries in it. I didn’t have time to clean them all up, so you may clean them as per your own targets and goals.

Now paste the following data (in squid.conf):

#######################################################

## Squid_LUSCA configuration Starts from Here ... #

## Thanks to Mr. Safatah [INDO] for sharing Configs #

## Syed.Jahanzaib / 22nd April, 2014 #

## http://aacable.wordpress.com / aacable@hotmail.com #

#######################################################

# HTTP Port for SQUID Service

http_port 8080 transparent

server_http11 on

# Cache peer, for a parent proxy if you have one; otherwise ignore it.

#cache_peer x.x.x.x parent 8080 0

# Various Logs/files location

pid_filename /var/run/squid.pid

coredump_dir /var/spool/squid/

error_directory /usr/share/squid/errors/English

icon_directory /usr/share/squid/icons

mime_table /etc/squid/mime.conf

access_log daemon:/var/log/squid/access.log squid

cache_log none

#debug_options ALL,1 22,3 11,2 #84,9

referer_log /var/log/squid/referer.log

cache_store_log none

store_dir_select_algorithm round-robin

logfile_daemon /usr/lib/squid/logfile-daemon

logfile_rotate 1

# Cache Policy

cache_mem 6 MB

maximum_object_size_in_memory 0 KB

memory_replacement_policy heap GDSF

cache_replacement_policy heap LFUDA

minimum_object_size 0 KB

maximum_object_size 10 GB

cache_swap_low 98

cache_swap_high 99

# Cache Folder Path, using 5GB for test

cache_dir aufs /cache-1 5000 16 256

# ACL Section

acl all src all

acl manager proto cache_object

acl localhost src 127.0.0.1/32

acl to_localhost dst 127.0.0.0/8

acl localnet src 10.0.0.0/8 # RFC1918 possible internal network

acl localnet src 172.16.0.0/12 # RFC1918 possible internal network

acl localnet src 192.168.0.0/16 # RFC1918 possible internal network

acl localnet src 125.165.92.1 # public (non-RFC1918) address; replace with your own or remove

acl SSL_ports port 443

acl Safe_ports port 80 # http

acl Safe_ports port 21 # ftp

acl Safe_ports port 443 # https

acl Safe_ports port 70 # gopher

acl Safe_ports port 210 # wais

acl Safe_ports port 1025-65535 # unregistered ports

acl Safe_ports port 280 # http-mgmt

acl Safe_ports port 488 # gss-http

acl Safe_ports port 591 # filemaker

acl Safe_ports port 777 # multiling http

acl CONNECT method CONNECT

acl purge method PURGE

acl snmppublic snmp_community public

acl range dstdomain .windowsupdate.com

range_offset_limit -1 KB range

#===========================================================================

# Loading Patch

acl DENYCACHE urlpath_regex \.(ini|ui|lst|inf|pak|ver|patch|md5|cfg|lst|list|rsc|log|conf|dbd|db)$

acl DENYCACHE urlpath_regex (notice.html|afs.dat|dat.asp|patchinfo.xml|version.list|iepngfix.htc|updates.txt|patchlist.txt)

acl DENYCACHE urlpath_regex (pointblank.css|login_form.css|form.css|noupdate.ui|ahn.ui|3n.mh)$

acl DENYCACHE urlpath_regex (Loader|gamenotice|sources|captcha|notice|reset)

no_cache deny DENYCACHE

range_offset_limit 1 MB !DENYCACHE

uri_whitespace strip

#===========================================================================

# Rules to block few Advertising sites

acl ads url_regex -i .youtube\.com\/ad_frame?

acl ads url_regex -i .(s|s[0-90-9])\.youtube\.com

acl ads url_regex -i .googlesyndication\.com

acl ads url_regex -i .doubleclick\.net

acl ads url_regex -i ^http:\/\/googleads\.*

acl ads url_regex -i ^http:\/\/(ad|ads|ads[0-90-9]|ads\d|kad|a[b|d]|ad\d|adserver|adsbox)\.[a-z0-9]*\.[a-z][a-z]*

acl ads url_regex -i ^http:\/\/openx\.[a-z0-9]*\.[a-z][a-z]*

acl ads url_regex -i ^http:\/\/[a-z0-9]*\.openx\.net\/

acl ads url_regex -i ^http:\/\/[a-z0-9]*\.u-ad\.info\/

acl ads url_regex -i ^http:\/\/adserver\.bs\/

acl ads url_regex -i !^http:\/\/adf\.ly

http_access deny ads

http_reply_access deny ads

#deny_info http://yoursite/yourad.htm ads

#==== End Rules: Advertising ====

strip_query_terms off

acl yutub url_regex -i .*youtube\.com\/.*$

acl yutub url_regex -i .*youtu\.be\/.*$

logformat squid1 %{Referer}>h %ru

access_log /var/log/squid/yt.log squid1 yutub

# ==== Custom Option REWRITE ====

acl store_rewrite_list urlpath_regex \/(get_video\?|videodownload\?|videoplayback.*id)

acl store_rewrite_list urlpath_regex \.(mp2|mp3|mid|midi|mp[234]|wav|ram|ra|rm|au|3gp|m4r|m4a)\?

acl store_rewrite_list urlpath_regex \.(mpg|mpeg|mp4|m4v|mov|avi|asf|wmv|wma|dat|flv|swf)\?

acl store_rewrite_list urlpath_regex \.(jpeg|jpg|jpe|jp2|gif|tiff?|pcx|png|bmp|pic|ico)\?

acl store_rewrite_list urlpath_regex \.(chm|dll|doc|docx|xls|xlsx|ppt|pptx|pps|ppsx|mdb|mdbx)\?

acl store_rewrite_list urlpath_regex \.(txt|conf|cfm|psd|wmf|emf|vsd|pdf|rtf|odt)\?

acl store_rewrite_list urlpath_regex \.(class|jar|exe|gz|bz|bz2|tar|tgz|zip|gzip|arj|ace|bin|cab|msi|rar)\?

acl store_rewrite_list urlpath_regex \.(htm|html|mhtml|css|js)\?

acl store_rewrite_list_web url_regex ^http:\/\/([A-Za-z-]+[0-9]+)*\.[A-Za-z]*\.[A-Za-z]*

acl store_rewrite_list_web_CDN url_regex ^http:\/\/[a-z]+[0-9]\.google\.com doubleclick\.net

acl store_rewrite_list_path urlpath_regex \.(mp2|mp3|mid|midi|mp[234]|wav|ram|ra|rm|au|3gp|m4r|m4a)$

acl store_rewrite_list_path urlpath_regex \.(mpg|mpeg|mp4|m4v|mov|avi|asf|wmv|wma|dat|flv|swf)$

acl store_rewrite_list_path urlpath_regex \.(jpeg|jpg|jpe|jp2|gif|tiff?|pcx|png|bmp|pic|ico)$

acl store_rewrite_list_path urlpath_regex \.(chm|dll|doc|docx|xls|xlsx|ppt|pptx|pps|ppsx|mdb|mdbx)$

acl store_rewrite_list_path urlpath_regex \.(txt|conf|cfm|psd|wmf|emf|vsd|pdf|rtf|odt)$

acl store_rewrite_list_path urlpath_regex \.(class|jar|exe|gz|bz|bz2|tar|tgz|zip|gzip|arj|ace|bin|cab|msi|rar)$

acl store_rewrite_list_path urlpath_regex \.(htm|html|mhtml|css|js)$

acl getmethod method GET

storeurl_access deny !getmethod

#this is not related to youtube video its only for CDN pictures

storeurl_access allow store_rewrite_list_web_CDN

storeurl_access allow store_rewrite_list_web store_rewrite_list_path

storeurl_access allow store_rewrite_list

storeurl_access deny all

storeurl_rewrite_program /etc/squid/storeurl.pl

storeurl_rewrite_children 10

storeurl_rewrite_concurrency 40

# ==== End Custom Option REWRITE ====

#===========================================================================

# Custom Option REFRESH PATTERN

#===========================================================================

refresh_pattern (get_video\?|videoplayback\?|videodownload\?|\.flv\?|\.fid\?) 43200 99% 43200 override-expire ignore-reload ignore-must-revalidate ignore-private

refresh_pattern -i (get_video\?|videoplayback\?|videodownload\?) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private

# -- refresh pattern for specific sites -- #

refresh_pattern ^http://*.jobstreet.com.*/.* 720 100% 10080 override-expire override-lastmod ignore-no-cache

refresh_pattern ^http://*.indowebster.com.*/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-reload ignore-no-cache ignore-auth

refresh_pattern ^http://*.21cineplex.*/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-reload ignore-no-cache ignore-auth

refresh_pattern ^http://*.atmajaya.*/.* 720 100% 10080 override-expire ignore-no-cache ignore-auth

refresh_pattern ^http://*.kompas.*/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.theinquirer.*/.* 720 100% 10080 override-expire ignore-no-cache ignore-auth

refresh_pattern ^http://*.blogspot.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.wordpress.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache

refresh_pattern ^http://*.photobucket.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.tinypic.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.imageshack.us/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.kaskus.*/.* 720 100% 28800 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://www.kaskus.com/.* 720 100% 28800 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.detik.*/.* 720 50% 2880 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.detiknews.*/*.* 720 50% 2880 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://video.liputan6.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://static.liputan6.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.friendster.com/.* 720 100% 10080 override-expire override-lastmod ignore-no-cache ignore-auth

refresh_pattern ^http://*.facebook.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://apps.facebook.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.fbcdn.net/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://profile.ak.fbcdn.net/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://static.playspoon.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://cooking.game.playspoon.com/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern -i http://[^a-z\.]*onemanga\.com/? 720 80% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://media?.onemanga.com/.* 720 80% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.yahoo.com/.* 720 80% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.google.com/.* 720 80% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.forummikrotik.com/.* 720 80% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

refresh_pattern ^http://*.linux.or.id/.* 720 100% 10080 override-expire override-lastmod reload-into-ims ignore-no-cache ignore-auth

# -- refresh pattern for extension -- #

refresh_pattern -i \.(mp2|mp3|mid|midi|mp[234]|wav|ram|ra|rm|au|3gp|m4r|m4a)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private

refresh_pattern -i \.(mpg|mpeg|mp4|m4v|mov|avi|asf|wmv|wma|dat|flv|swf)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private

refresh_pattern -i \.(jpeg|jpg|jpe|jp2|gif|tiff?|pcx|png|bmp|pic|ico)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private

refresh_pattern -i \.(chm|dll|doc|docx|xls|xlsx|ppt|pptx|pps|ppsx|mdb|mdbx)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private

refresh_pattern -i \.(txt|conf|cfm|psd|wmf|emf|vsd|pdf|rtf|odt)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private

refresh_pattern -i \.(class|jar|exe|gz|bz|bz2|tar|tgz|zip|gzip|arj|ace|bin|cab|msi|rar)(\?.*|$) 5259487 999% 5259487 override-expire ignore-reload reload-into-ims ignore-no-cache ignore-private

refresh_pattern -i \.(htm|html|mhtml|css|js)(\?.*|$) 1440 90% 86400 override-expire ignore-reload reload-into-ims

#===========================================================================

refresh_pattern -i (/cgi-bin/|\?) 0 0% 0

refresh_pattern ^gopher: 1440 0% 1440

refresh_pattern ^ftp: 10080 95% 10080 override-lastmod reload-into-ims

refresh_pattern . 0 20% 10080 override-lastmod reload-into-ims

http_access allow manager localhost

http_access deny manager

http_access allow purge localhost

http_access deny !Safe_ports

http_access deny CONNECT !SSL_ports

http_access allow localnet

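# "allow all" after "allow localnet" lets any source use the proxy; remove it if the box is reachable from the WAN
http_access allow all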

http_access deny all

icp_access allow localnet

icp_access deny all

icp_port 0

buffered_logs on

acl shoutcast rep_header X-HTTP09-First-Line ^ICY.[0-9]

upgrade_http0.9 deny shoutcast

acl apache rep_header Server ^Apache

broken_vary_encoding allow apache

forwarded_for off

header_access From deny all

header_access Server deny all

header_access Link deny all

header_access Via deny all

header_access X-Forwarded-For deny all

httpd_suppress_version_string on

shutdown_lifetime 10 seconds

snmp_port 3401

snmp_access allow snmppublic all

dns_timeout 1 minutes

dns_nameservers 8.8.8.8 8.8.4.4

fqdncache_size 5000 #16384

ipcache_size 5000 #16384

ipcache_low 98

ipcache_high 99

log_fqdn off

log_icp_queries off

memory_pools off

maximum_single_addr_tries 2

retry_on_error on

icp_hit_stale on

strip_query_terms off

query_icmp on

reload_into_ims on

emulate_httpd_log off

negative_ttl 0 seconds

pipeline_prefetch on

vary_ignore_expire on

half_closed_clients off

high_page_fault_warning 2

nonhierarchical_direct on

prefer_direct off

cache_mgr aacable@hotmail.com

cache_effective_user proxy

cache_effective_group proxy

visible_hostname proxy.zaib

unique_hostname syed_jahanzaib

cachemgr_passwd none all

client_db on

max_filedescriptors 8192

# ZPH config Marking Cache Hit, so cached contents can be delivered at full lan speed via MT

zph_mode tos

zph_local 0x30

zph_parent 0

zph_option 136

STOREURL.PL

Now we have to create the file storeurl.pl. It is very important, as it does the main job of rewriting URLs so that videos can be pulled from the cache.

touch /etc/squid/storeurl.pl

chmod +x /etc/squid/storeurl.pl

nano /etc/squid/storeurl.pl

Now paste the following lines, then Save and exit.

#!/usr/bin/perl

#######################################################

## Squid_LUSCA storeurl.pl starts from Here ... #

## Thanks to Mr. Safatah [INDO] for sharing Configs #

## Syed.Jahanzaib / 22nd April, 2014 #

## http://aacable.wordpress.com / aacable@hotmail.com #

#######################################################

$|=1;

while (<>) {

@X = split;

$x = $X[0] . " ";

##=================

## Encoding YOUTUBE

##=================

if ($X[1] =~ m/^http\:\/\/.*(youtube|google).*videoplayback.*/){

@itag = m/[&?](itag=[0-9]*)/;

@CPN = m/[&?]cpn\=([a-zA-Z0-9\-\_]*)/;

@IDS = m/[&?]id\=([a-zA-Z0-9\-\_]*)/;

$id = &GetID($CPN[0], $IDS[0]);

@range = m/[&?](range=[^\&\s]*)/;

print $x . "http://fathayu/" . $id . "&@itag@range\n";

} elsif ($X[1] =~ m/(youtube|google).*videoplayback\?/ ){

@itag = m/[&?](itag=[0-9]*)/;

@id = m/[&?](id=[^\&]*)/;

@redirect = m/[&?](redirect_counter=[^\&]*)/;

print $x . "http://fathayu/@id&@itag\n";

# ==========================================================================

# VIMEO

# ==========================================================================

} elsif ($X[1] =~ m/^http:\/\/av\.vimeo\.com\/\d+\/\d+\/(.*)\?/) {

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/pdl\.vimeocdn\.com\/\d+\/\d+\/(.*)\?/) {

print $x . "http://fathayu/" . $1 . "\n";

# ==========================================================================

# DAILYMOTION

# ==========================================================================

} elsif ($X[1] =~ m/^http:\/\/proxy-[0-9]{1}\.dailymotion\.com\/(.*)\/(.*)\/video\/\d{3}\/\d{3}\/(.*.flv)/) {

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/vid[0-9]\.ak\.dmcdn\.net\/(.*)\/(.*)\/video\/\d{3}\/\d{3}\/(.*.flv)/) {

print $x . "http://fathayu/" . $1 . "\n";

# ==========================================================================

# YIMG

# ==========================================================================

} elsif ($X[1] =~ m/^http:\/\/(.*yimg.com)\/\/(.*)\/([^\/\?\&]*\/[^\/\?\&]*\.[^\/\?\&]{3,4})(\?.*)?$/) {

print $x . "http://fathayu/" . $3 . "\n";

# ==========================================================================

# YIMG DOUBLE

# ==========================================================================

} elsif ($X[1] =~ m/^http:\/\/(.*?)\.yimg\.com\/(.*?)\.yimg\.com\/(.*?)\?(.*)/) {

print $x . "http://fathayu/" . $3 . "\n";

# ==========================================================================

# YIMG WITH &sig=

# ==========================================================================

} elsif ($X[1] =~ m/^http:\/\/(.*?)\.yimg\.com\/(.*)/) {

@y = ($1,$2);

$y[0] =~ s/[a-z]+[0-9]+/cdn/;

$y[1] =~ s/&sig=.*//;

print $x . "http://fathayu/" . $y[0] . ".yimg.com/" . $y[1] . "\n";

# ==========================================================================

# YTIMG

# ==========================================================================

} elsif ($X[1] =~ m/^http:\/\/i[1-4]\.ytimg\.com(.*)/) {

print $x . "http://fathayu/" . $1 . "\n";

# ==========================================================================

# PORN Movies

# ==========================================================================

} elsif (($X[1] =~ /maxporn/) && (m/^http:\/\/([^\/]*?)\/(.*?)\/([^\/]*?)(\?.*)?$/)) {

print $x . "http://" . $1 . "/SQUIDINTERNAL/" . $3 . "\n";

# Domain/path/.*/path/filename

} elsif (($X[1] =~ /fucktube/) && (m/^http:\/\/(.*?)(\.[^\.\-]*?[^\/]*\/[^\/]*)\/(.*)\/([^\/]*)\/([^\/\?\&]*)\.([^\/\?\&]{3,4})(\?.*?)$/)) {

@y = ($1,$2,$4,$5,$6);

$y[0] =~ s/(([a-zA-Z]+[0-9]+(-[a-zA-Z])?$)|([^\.]*cdn[^\.]*)|([^\.]*cache[^\.]*))/cdn/;

print $x . "http://" . $y[0] . $y[1] . "/" . $y[2] . "/" . $y[3] . "." . $y[4] . "\n";

# Like pornhub: variable URL and center part of the path, filename extension of 3 or 4 characters, with or without ? at the end

} elsif (($X[1] =~ /tube8|pornhub|xvideos/) && (m/^http:\/\/(([A-Za-z]+[0-9-.]+)*?(\.[a-z]*)?)\.([a-z]*[0-9]?\.[^\/]{3}\/[a-z]*)(.*?)((\/[a-z]*)?(\/[^\/]*){4}\.[^\/\?]{3,4})(\?.*)?$/)) {

print $x . "http://cdn." . $4 . $6 . "\n";

} elsif (($X[1] =~ /tube8|redtube|hardcore-teen|pornhub|tubegalore|xvideos|hostedtube|pornotube|redtubefiles/) && (m/^http:\/\/(([A-Za-z]+[0-9-.]+)*?(\.[a-z]*)?)\.([a-z]*[0-9]?\.[^\/]{3}\/[a-z]*)(.*?)((\/[a-z]*)?(\/[^\/]*){4}\.[^\/\?]{3,4})(\?.*)?$/)) {

print $x . "http://cdn." . $4 . $6 . "\n";

# acl store_rewrite_list url_regex -i \.xvideos\.com\/.*(3gp|mpg|flv|mp4)

# refresh_pattern -i \.xvideos\.com\/.*(3gp|mpg|flv|mp4) 1440 99% 14400 override-expire override-lastmod ignore-no-cache ignore-private reload-into-ims ignore-must-revalidate ignore-reload store-stale

# ==========================================================================

} elsif ($X[1] =~ m/^http:\/\/.*\.xvideos\.com\/.*\/([\w\d\-\.\%]*\.(3gp|mpg|flv|mp4))\?.*/){

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/[\d]+\.[\d]+\.[\d]+\.[\d]+\/.*\/xh.*\/([\w\d\-\.\%]*\.flv)/){

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/[\d]+\.[\d]+\.[\d]+\.[\d]+.*\/([\w\d\-\.\%]*\.flv)\?start=0/){

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/.*\.youjizz\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp))\?.*/){

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/[\w\d\-\.\%]*\.keezmovies[\w\d\-\.\%]*\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp|mpg|wmv))\?.*/){

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/[\w\d\-\.\%]*\.tube8[\w\d\-\.\%]*\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp|mpg|wmv))\?.*/) {

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/[\w\d\-\.\%]*\.youporn[\w\d\-\.\%]*\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp|mpg|wmv))\?.*/){

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/[\w\d\-\.\%]*\.spankwire[\w\d\-\.\%]*\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp|mpg|wmv))\?.*/) {

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/[\w\d\-\.\%]*\.pornhub[\w\d\-\.\%]*\.com.*\/([\w\d\-\.\%]*\.(mp4|flv|3gp|mpg|wmv))\?.*/){

print $x . "http://fathayu/" . $1 . "\n";

} elsif ($X[1] =~ m/^http:\/\/[\w\d\-\_\.\%\/]*.*\/([\w\d\-\_\.]+\.(flv|mp3|mp4|3gp|wmv))\?.*cdn\_hash.*/){

print $x . "http://fathayu/" . $1 . "\n";

} elsif (($X[1] =~ /maxporn/) && (m/^http:\/\/([^\/]*?)\/(.*?)\/([^\/]*?)(\?.*)?$/)) {

print $x . "http://fathayu/" . $1 . "/SQUIDINTERNAL/" . $3 . "\n";

} elsif (($X[1] =~ /fucktube/) && (m/^http:\/\/(.*?)(\.[^\.\-]*?[^\/]*\/[^\/]*)\/(.*)\/([^\/]*)\/([^\/\?\&]*)\.([^\/\?\&]{3,4})(\?.*?)$/)) {

@y = ($1,$2,$4,$5,$6);

$y[0] =~ s/(([a-zA-Z]+[0-9]+(-[a-zA-Z])?$)|([^\.]*cdn[^\.]*)|([^\.]*cache[^\.]*))/cdn/;

print $x . "http://fathayu/" . $y[0] . $y[1] . "/" . $y[2] . "/" . $y[3] . "." . $y[4] . "\n";

} elsif (($X[1] =~ /media[0-9]{1,5}\.youjizz/) && (m/^http:\/\/(.*?)(\.[^\.\-]*?\.[^\/]*)\/(.*)\/([^\/\?\&]*)\.([^\/\?\&]{3,4})(\?.*?)$/)) {

@y = ($1,$2,$4,$5);

$y[0] =~ s/(([a-zA-Z]+[0-9]+(-[a-zA-Z])?$)|([^\.]*cdn[^\.]*)|([^\.]*cache[^\.]*))/cdn/;

print $x . "http://fathayu/" . $y[0] . $y[1] . "/" . $y[2] . "." . $y[3] . "\n";

# ==========================================================================

# Filehippo

# ==========================================================================

} elsif (($X[1] =~ /filehippo/) && (m/^http:\/\/(.*?)\.(.*?)\/(.*?)\/(.*)\.([a-z0-9]{3,4})(\?.*)?/)) {

@y = ($1,$2,$4,$5);

$y[0] =~ s/[a-z0-9]{2,5}/cdn./;

print $x . "http://fathayu/" . $y[0] . $y[1] . "/" . $y[2] . "." . $y[3] . "\n";

} elsif (($X[1] =~ /filehippo/) && (m/^http:\/\/(.*?)(\.[^\/]*?)\/(.*?)\/([^\?\&\=]*)\.([\w\d]{2,4})\??.*$/)) {

@y = ($1,$2,$4,$5);

$y[0] =~ s/([a-z][0-9][a-z]dlod[\d]{3})|((cache|cdn)[-\d]*)|([a-zA-Z]+-?[0-9]+(-[a-zA-Z]*)?)/cdn/;

print $x . "http://fathayu/" . $y[0] . $y[1] . "/" . $y[2] . "." . $y[3] . "\n";

} elsif ($X[1] =~ m/^http:\/\/.*filehippo\.com\/.*\/([\d\w\%\.\_\-]+\.(exe|zip|cab|msi|mru|mri|bz2|gzip|tgz|rar|pdf))/){

$y=$1;

for ($y) {

s/%20//g;

}

print $x . "http://fathayu//" . $y . "\n";

} elsif (($X[1] =~ /filehippo/) && (m/^http:\/\/(.*?)\.(.*?)\/(.*?)\/(.*)\.([a-z0-9]{3,4})(\?.*)?/)) {

@y = ($1,$2,$4,$5);

$y[0] =~ s/[a-z0-9]{2,5}/cdn./;

print $x . "http://fathayu/" . $y[0] . $y[1] . "/" . $y[2] . "." . $y[3] . "\n";

# ==========================================================================

# 4shared preview

# ==========================================================================

} elsif ($X[1] =~ m/^http:\/\/[a-z]{2}\d{3}\.4shared\.com\/img\/\d+\/\w+\/dlink__2Fdownload_2F.*_3Ftsid_(\w+)-\d+-\w+_26lgfp_3D1000_26sbsr_\w+\/preview.mp3/) {

print $x . "http://fathayu/" . $1 . "\n";

} else {

print $x . $X[1] . "\n";

}

}

sub GetID

{

$id = "";

use File::ReadBackwards;

my $lim = 200 ;

my $ref_log = File::ReadBackwards->new('/var/log/squid/referer.log');

while (defined($line = $ref_log->readline))

{

if ($line =~ m/.*youtube.*\/watch\?.*v=([a-zA-Z0-9\-\_]*).*\s.*id=$IDS[0].*/){

$id = $1;

last;

}

if ($line =~ m/.*youtube.*\/.*cpn=$CPN[0].*[&](video_id|docid|v)=([a-zA-Z0-9\-\_]*).*/){

$id = $2;

last;

}

if ($line =~ m/.*youtube.*\/.*[&?](video_id|docid|v)=([a-zA-Z0-9\-\_]*).*cpn=$CPN[0].*/){

$id = $2;

last;

}

last if --$lim <= 0;

}

if ($id eq ""){

$id = $IDS[0];

}

$ref_log->close();

return $id;

}

### STOREURL.PL ENDS HERE ###
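To make the idea behind storeurl.pl easier to see, here is a much reduced sketch of what its first YouTube branch does: it throws away the changing server name and signature parameters and keeps only id, itag and range, so the same video chunk fetched from two different servers ends up under one cache key. The function name store_key and the two sample host names are made up for illustration, and the referer.log lookup done by GetID is left out.

```shell
# store_key URL
# Hypothetical helper: print a host-independent cache key for a videoplayback URL.
store_key() {
    url=$1
    # Keep only the parameters that identify the content; host, signature etc. are dropped.
    id=$(printf '%s\n' "$url" | sed -n 's/.*[?&]id=\([A-Za-z0-9_-]*\).*/\1/p')
    itag=$(printf '%s\n' "$url" | sed -n 's/.*[?&]\(itag=[0-9]*\).*/\1/p')
    range=$(printf '%s\n' "$url" | sed -n 's/.*[?&]\(range=[^&]*\).*/\1/p')
    # Same layout as the Perl script: http://fathayu/<id>&<itag><range>
    printf 'http://fathayu/%s&%s%s\n' "$id" "$itag" "$range"
}

# Two made-up URLs for the same chunk, served by different hosts:
store_key 'http://r1---sn-aaa.googlevideo.com/videoplayback?id=abc123&itag=18&range=0-1000&sig=X'
store_key 'http://r7---sn-bbb.googlevideo.com/videoplayback?sig=Y&id=abc123&itag=18&range=0-1000'
# both print http://fathayu/abc123&itag=18range=0-1000
```

Because both requests map to the same key, the second one can be answered from the cache even though its original URL is different.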

INITIALIZE CACHE and LOG FOLDER …

Now create the log and cache folders (for this test I used a folder on the local drive).

# Log folder, with permissions for the proxy user

mkdir /var/log/squid

chown proxy:proxy /var/log/squid/

# Cache folder

mkdir /cache-1

chown proxy:proxy /cache-1

# Now initialize the cache directory

squid -z
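For reference, the cache_dir line used in squid.conf above (cache_dir aufs /cache-1 5000 16 256) reads as: storage type, folder, size in MB, number of first-level folders, number of second-level folders inside each first-level folder. squid -z creates that folder tree. A small sketch of the arithmetic follows; the function name describe_cache_dir is made up for illustration.

```shell
# describe_cache_dir TYPE PATH SIZE_MB L1 L2
# Hypothetical helper: summarise a cache_dir line in plain words.
describe_cache_dir() {
    # L1 first-level folders, each holding L2 second-level folders
    echo "$2: $3 MB in $(( $4 * $5 )) second-level directories ($1)"
}

describe_cache_dir aufs /cache-1 5000 16 256
# prints /cache-1: 5000 MB in 4096 second-level directories (aufs)
```

When you move from the 5 GB test size to real storage, only the size field (and the path, if you use another disk) needs to change.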

START SQUID SERVICE

Now start the SQUID service with the following command:

squid

Watch for any error or termination. If all is OK, just press Enter a few times and you will be back at the command prompt.

To verify that squid is running OK, issue the following command and look for squid instances; there should be 10+ instances of the squid process.

ps aux |grep squid

Something like below …

![123]()

TIP:

To start the SQUID server in debug mode, to check for any errors, use the following:

squid -d1N

TEST time ….

It’s time to hit the ROAD and do some tests….

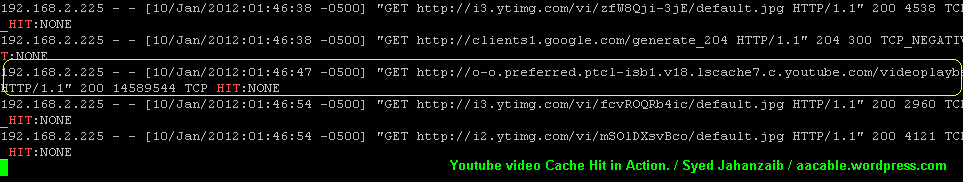

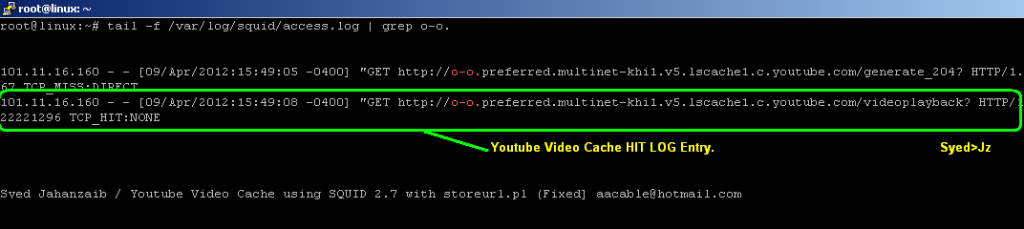

YOUTUBE TEST

Open YouTube and watch any video. After it has downloaded completely, play the same video from another client. You will notice that it downloads very quickly. (A YouTube video is saved in chunks of about 1.7 MB each, so after the first chunk completes it will stop; if the user continues to watch the same video, the second chunk is served, and so on.) You can watch the bar moving fast without using internet data.

As Shown in the example Below . . .

![lusca_test]()

![YT cache HIT]()

FILEHIPPO TEST [ZAIB]

![FILHIPPO]()

As Shown in the example Below . . .

![FILHIPPO]()

![Youtube-Video_cache_HIT-2nd-client]()

![YT-Cache-HIT]()

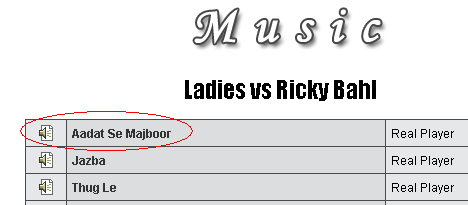

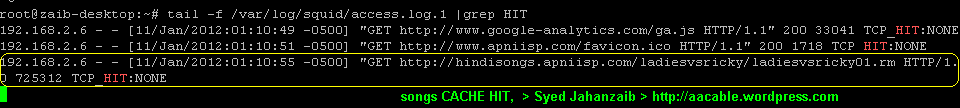

MUSIC DOWNLOAD TEST

Now test any music download. For example, go to

http://www.apniisp.com/songs/indian-movie-songs/ladies-vs-ricky-bahl/690/1.html

As Shown in the example Below . . .

![apniisp download]()

and download any song. After it has downloaded, go to a 2nd client PC, download the same song, and monitor the Squid access log. You will see a cache hit (TCP_HIT) for this song.

As Shown in the example Below . . .

![apniisp_log_cache_hit]()

EXE / PROGRAM DOWNLOAD TEST

Now test any .exe file download.

Go to http://www.rarlabs.com and download any package. After the download completes, go to a 2nd client PC, download the same file again, and monitor the Squid access log. You will see a cache hit (TCP_HIT) for this file.

As Shown in the example Below . . .

![winrar-download-page]()

SQUID LOGS

![exe_file_cache_HIT]()
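Instead of reading the access log by eye, the hits can also be counted. The sketch below assumes the log is in Squid's native format, as set by the access_log ... squid line in the squid.conf above, where the 4th field holds the result code (TCP_HIT/200, TCP_MISS/200 and so on). The function name hit_ratio is made up for illustration.

```shell
# hit_ratio
# Hypothetical helper: read native-format access.log lines on stdin and
# print "hits/total (percent)". Any result code containing HIT counts as a hit
# (TCP_HIT, TCP_MEM_HIT, TCP_IMS_HIT, TCP_REFRESH_HIT).
hit_ratio() {
    awk '
        NF { total++ }
        $4 ~ /HIT/ { hits++ }
        END {
            if (total == 0) { print "no requests"; exit }
            printf "%d/%d (%.1f%%)\n", hits, total, 100 * hits / total
        }'
}
```

Typical use would be hit_ratio < /var/log/squid/access.log, or tail -n 1000 /var/log/squid/access.log | hit_ratio to look only at recent requests.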

Other methods are as follows (I will update the following Squid 2.7 article soon):

http://aacable.wordpress.com/2012/01/19/youtube-caching-with-squid-2-7-using-storeurl-pl/

http://aacable.wordpress.com/2012/08/13/youtube-caching-with-squid-nginx/

MIKROTIK with SQUID/ZPH: how to bypass the queues for Squid cache HIT objects with Queue Tree in RouterOS 5.x and 6.x

![zph]()

Using Mikrotik, we can redirect HTTP traffic to the SQUID proxy server and also control user bandwidth. But it is a good idea to deliver already cached content to users at full LAN speed; that is what we set up a cache server for, to save bandwidth and get a fast browsing experience, right? :p So how do we make Mikrotik deliver cached content at unlimited speed, with no queue applied to it? Here we go.

Using the ZPH directives, we mark cached content so that Mikrotik can pick it up later.

The basic requirement is that Squid must be running in transparent mode, which can be done via iptables and squid.conf directives.

I am using Squid 2.7 on Ubuntu (on Ubuntu, apt-get install squid installs Squid 2.7 by default, which is great for our work).

Add these lines to squid.conf:

#===============================================================================

#ZPH for SQUID 2.7 (Default in ubuntu 10.04) / Syed Jahanzaib aacable@hotmail.com

#===============================================================================

# lanuser is the ACL for your local network; change it to match yours

tcp_outgoing_tos 0x30 lanuser

zph_mode tos

zph_local 0x30

zph_parent 0

zph_option 136

Use the following if you have Squid 3.1.19:

#======================================================

#ZPH for SQUID 3.1.19 (Default in ubuntu 12.04) / Syed Jahanzaib aacable@hotmail.com

#======================================================

# ZPH for Squid 3.1.19

qos_flows local-hit=0x30

That’s it for SQUID. Now, moving on to the Mikrotik box, add the following rules:

# Marking packets with DSCP (for MT 5.x) for cache hit content coming from SQUID Proxy

/ip firewall mangle add action=mark-packet chain=prerouting disabled=no dscp=12 new-packet-mark=proxy-hit passthrough=no comment="Mark Cache Hit Packets / aacable@hotmail.com"

/queue tree add burst-limit=0 burst-threshold=0 burst-time=0s disabled=no limit-at=0 max-limit=0 name=pmark packet-mark=proxy-hit parent=global-out priority=8 queue=default

# Marking packets with DSCP (for MT 6.x) for cache hit content coming from SQUID Proxy

/ip firewall mangle add action=mark-packet chain=prerouting comment="MARK_CACHE_HIT_FROM_PROXY_ZAIB" disabled=no dscp=12 new-packet-mark=zph-hit passthrough=no

/queue simple

add max-limit=100M/100M name="ZPH-Proxy Cache Hit Simple Queue / Syed Jahanzaib >aacable@hotmail.com" packet-marks=zph-hit priority=1/1 target="" total-priority=1

MAKE SURE YOU MOVE THE SIMPLE QUEUE ABOVE ALL OTHER QUEUES :D

Now every packet that SQUID marks as a cache HIT will be delivered to the user at full LAN speed; the rest of the traffic will be restricted by the user queue.
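A note on the numbers: Squid is told to mark hits with TOS 0x30, while the Mikrotik rules match dscp=12. These are the same mark. DSCP is the upper six bits of the TOS byte, so the DSCP value is the TOS value shifted right by two bits. The sketch below only shows that arithmetic; the function name tos_to_dscp is made up for illustration.

```shell
# tos_to_dscp TOS
# Hypothetical helper: drop the two low-order bits of the TOS byte to get the DSCP value.
tos_to_dscp() {
    echo $(( $1 >> 2 ))
}

tos_to_dscp 0x30   # the zph_local value from squid.conf; prints 12
```

If you pick a different zph_local value, the dscp= value in the mangle rule has to be changed to match in the same way.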

TROUBLESHOOTING:

The above config is fully tested with UBUNTU SQUID 2.7 and FEDORA 10 with LUSCA.

Make sure your squid is marking TOS on cache hit packets. You can check it with tcpdump (eth0 = LAN-connected interface):

tcpdump -vni eth0 | grep 'tos 0x30'

Do you see something like this?

tcpdump: listening on eth0, link-type EN10MB (Ethernet), capture size 96 bytes

20:25:07.961722 IP (tos 0x30, ttl 64, id 45167, offset 0, flags [DF], proto TCP (6), length 409)

20:25:07.962059 IP (tos 0x30, ttl 64, id 45168, offset 0, flags [DF], proto TCP (6), length 1480)

192 packets captured

195 packets received by filter

0 packets dropped by kernel

Regards,

SYED JAHANZAIB

http://aacable.wordpress.com
